A Modest Proposal for Post-NISQ Quantum Computing Based on Near-perfect Qubits

NISQ quantum computers cannot handle more than relatively trivial quantum algorithms, largely due to low qubit fidelity (noise), and the grand vision of full quantum error correction lies too far in the future, if it arrives at all. This informal paper explores the issues and how to transcend them to achieve what I call post-NISQ quantum computing, which may in fact usher in the era of practical quantum computing, using near-perfect qubits rather than waiting for full quantum error correction.

Topics discussed in this informal paper:

- Quick summary of what’s wrong with current (NISQ) quantum computers

- In a nutshell

- Caveat: This informal paper relates only to general-purpose quantum computers

- What is NISQ?

- Three nines of qubit fidelity appears to be the practical limit for NISQ, even a barrier

- Three nines of qubit fidelity may be the gateway to post-NISQ quantum computing

- Traditional view of beyond NISQ

- The essential technical limitations of NISQ

- The negative consequences of the limitations of NISQ

- Qubit count is NOT an issue, at this stage

- But trapped-ion quantum computers DO need more qubits

- And quantum error correction would need a LOT more qubits

- The grand (unfulfilled) promise of quantum error correction (QEC)

- The path to practical quantum computing

- What is post-NISQ quantum computing?

- Near-perfect qubits as the path to practical quantum computing

- What are nines of qubit fidelity?

- 3.5 to four nines should be the primary initial target for qubit fidelity

- If the qubit error rate is low enough it shouldn’t be considered noisy — or NISQ

- Circuit repetitions (shots) to compensate for lingering errors

- Error mitigation and error suppression

- But the goal of this proposal is to reduce and minimize the need for error mitigation

- Full any-to-any qubit connectivity is essential

- But trapped-ion qubits already have full qubit connectivity

- Coherence time and gate execution time will eventually be critical gating factors, but not yet

- Must enable larger and more complex quantum circuits to achieve practical quantum computing

- Target of 2,500 gates for maximum circuit size for a basic practical quantum computer

- Dozens, not hundreds, of qubits are a more practical goal for the next stage of quantum computing

- Target 48 fully-connected near-perfect qubits as the sweet spot goal for a basic practical quantum computer

- Terminology for post-NISQ quantum computers — NPSSQ, NPISQ, and NPLSQ

- Expectations for significant quantum advantage

- Must deliver substantial real value to the organization

- Post-NISQ quantum computing should enable The ENIAC Moment

- This proposal is independent of the qubit technology

- Timeframe of next two to seven years

- Multiple stages of post-NISQ quantum computing

- How long might the post-NISQ era last? Until it runs out of steam

- What might come after the post-NISQ era? Unknown

- What about software and tools for developers?

- What to do until post-NISQ quantum computers arrive? Use a simulator up to 32 to 50 qubits

- What to avoid while waiting for post-NISQ quantum computers

- Risk of a severe Quantum Winter in a few years

- Physical qubits, not perfect logical qubits

- Is near-perfect still noisy (NISQ) or truly post-NISQ?

- Potential for minimalist quantum error correction

- Future for NISQ is indeed bleak

- NISQ is dead, NISQ is a dying dead end, and NISQ no longer has a bright future or any potential for being a practical quantum computer

- Conclusion

Quick summary of what’s wrong with current (NISQ) quantum computers

Despite the tremendous advances in quantum computing over the past decade, current quantum computers, so-called NISQ devices, suffer from a number of fatal limitations. In short, these include:

- Qubits and gates are too noisy. Limits size of quantum algorithms and circuits before errors accumulate to render the results unusable. Three nines of qubit fidelity appears to be the practical limit for NISQ, even a barrier.

- Coherence time is too short. Limits the size of quantum algorithms and quantum circuits.

- The size of quantum algorithms and quantum circuits is too limited.

- Very limited qubit connectivity also severely limits the size of quantum algorithms and quantum circuits.

- Lack of fine granularity of phase and probability amplitude limits the complexity of quantum algorithms and circuits. Especially quantum Fourier transforms and quantum phase estimation.

The net effect of these technical limitations is that:

- The effort required to cope with and work around these technical limitations dramatically limits productivity and ability to produce working quantum applications.

- The limitations of the technology limit the size, complexity, and usefulness of quantum algorithms and quantum applications.

In a nutshell

- Current NISQ quantum computers are not well-suited for practical quantum computing. Too noisy and don’t support larger quantum circuits.

- Post-NISQ is practical quantum computing. By definition.

- Full quantum error correction is impractical in the near term. And maybe even in the longer term.

- Near-perfect qubits are a critical gating factor to achieving practical quantum computing. They obviate the need for full quantum error correction for many applications.

- Dramatically reduces and minimizes the need for error mitigation.

- Three nines of qubit fidelity appears to be the practical limit for NISQ, even a barrier.

- Three nines of qubit fidelity may be the gateway to post-NISQ quantum computing.

- Full any-to-any qubit connectivity is essential. Especially for larger quantum circuits.

- Larger and more complex quantum circuits and quantum algorithms must be supported.

- Longer coherence time is essential.

- Shorter gate execution time is essential.

- Higher qubit measurement fidelity is essential.

- Fine granularity of phase and probability amplitude is essential. Especially to support non-trivial quantum Fourier transforms and quantum phase estimation, such as is needed for quantum computational chemistry.

- 3.5 nines of qubit fidelity, fully connected, and able to execute 2,500 gates should be a good start for practical quantum computing.

- Replace NISQ with NPSSQ, NPISQ, and NPLSQ to be post-NISQ. All using near-perfect qubits (NP), and representing small scale (SS: less than 50 qubits), intermediate scale (IS: 50 to hundreds of qubits), and large scale (LS: over 1,000 qubits) qubit counts.

- Expect significant quantum advantage. 1,000,000X a classical solution.

- 48 fully-connected near-perfect qubits may be the sweet spot goal for a basic practical quantum computer.

- Must deliver substantial real value to the organization. The technical metrics don’t really matter.

- Post-NISQ quantum computing should enable The ENIAC Moment. The first moment when a quantum computer is able to demonstrate a production-scale quantum application achieving some significant level of quantum advantage.

- This proposal is independent of the qubit technology. Should apply equally well to superconducting transmon qubits, trapped-ion qubits, semiconductor spin qubits, or other qubit technologies, but the focus is on general-purpose quantum computers, not more specialized forms of quantum computing. At present, the latter includes quantum annealing systems, neutral-atom qubits, and photonic quantum computers, including boson sampling devices.

- Timeframe of next two to seven years.

- What to do until then? Use a simulator up to 32 to 50 qubits.

- How long might the post-NISQ era last? Until it runs out of steam.

- What might come after the post-NISQ era? Unknown.

- This proposal wouldn’t enable all envisioned quantum applications, but a reasonable fraction, a lot better than current and near-term NISQ quantum computers.

- And as qubit fidelity, coherence time, and gate execution time continue to evolve in the coming years, incrementally more quantum applications would be supported.

- Future for NISQ is indeed bleak.

- NISQ is dead, NISQ is a dying dead end, and NISQ no longer has a bright future or any potential for being a practical quantum computer.

- Let’s go post-NISQ quantum computing! Based on near-perfect qubits, not full quantum error correction.

Caveat: This informal paper relates only to general-purpose quantum computers

This informal paper relates only to general-purpose quantum computers, sometimes referred to as gate-based, circuit-based, or digital, which are at least relatively compatible from a programming model perspective, not to be confused with:

- Quantum annealing systems. D-Wave.

- Single-function quantum computers.

- Special-purpose quantum computers.

- Photonic quantum computers. Or boson sampling devices.

- Neutral-atom quantum computers operating in analog mode. Unclear if digital or gate mode is truly general purpose or compatible with other general-purpose quantum computers.

- Physics simulators. Custom-designed, special-purpose quantum computing device designed to address a specific physics problem. Not a general-purpose quantum computer. Debatable whether it should even be called a quantum computer, but it is a specialized quantum computing device.

- Software-configurable physics experiments. A step up from task-oriented physics simulators, these physics experiments are programmable or at least configurable to some degree, but simply in terms of varying the physics experiment, not intending to offer a general-purpose quantum computing capability as is the case with gate-based superconducting transmon qubits or trapped-ion qubits. Current neutral-atom quantum computers, typically running in so-called analog processing mode, are effectively software-configurable physics experiments.

- Anything else which is not programmed in a manner comparable to a general-purpose quantum computer.

This is not to say that such alternative approaches might not deliver significant value, just that such approaches do not constitute general-purpose quantum computing, which is my own personal primary focus, and seems to have the promise for achieving some reasonable fraction of the many grand promises made for quantum computing for the widest range of applications.

For more on the definition and nuances of a general-purpose quantum computer, see my informal paper:

- What Is a General-Purpose Quantum Computer?

- https://jackkrupansky.medium.com/what-is-a-general-purpose-quantum-computer-9da348c89e71

What is NISQ?

We refer to NISQ, NISQ device, NISQ quantum computer, and the NISQ era, but what does NISQ really mean?

The term NISQ was coined by Caltech Professor John Preskill in 2018 to refer to Noisy Intermediate-Scale Quantum devices or computers:

The “N” of NISQ means that the qubits are noisy, meaning that they have trouble maintaining their correct state over any extended period of time and operations and measurements of them are problematic. Preskill doesn’t offer any definitive threshold or range for how noisy qubits could be, but there is one reference to an error rate of 0.1% and another reference to an error rate of 1%. I infer from this that an error rate below 0.1% would not a priori be considered noisy.

Three nines of qubit fidelity appears to be the practical limit for NISQ, even a barrier

From observing the quantum computing sector over the past two years, it appears that an error rate of 0.1% (three nines of qubit fidelity) is a nearly impenetrable barrier for NISQ quantum computers. IBM has suggested that their Heron processor due late in 2023 should have three nines of qubit fidelity. But, so far, nobody is talking about achieving qubit fidelity above three nines this year, next year, or whenever. Actually, Atlantic Quantum is talking about a significant improvement, and it wouldn’t surprise me if some other vendors achieve three nines or slightly higher, but for now, three nines appears to be the practical limit.

Three nines of qubit fidelity may be the gateway to post-NISQ quantum computing

So, I take the position that three nines may indeed be the practical limit for NISQ, and may be the gateway to near-perfect qubits and near-perfect quantum computers, even practical quantum computers, and what this informal paper calls post-NISQ quantum computing.

That is not to say that three nines or 3.25 nines is absolutely post-NISQ or practical quantum computing, just that the question and evaluation of practical quantum computing becomes much more relevant at that stage.

I still lean towards something reasonably higher than three nines to really mark the advent of post-NISQ quantum computing. Four nines would definitely do the trick, maybe 3.75 nines, and maybe even 3.5 nines. Sure, 3.25 nines might also work, and may work for some applications, but it’s not a slam dunk at this juncture.

Regardless, three nines of qubit fidelity is at least a trigger for consideration and evaluation of whether the qubits should still be considered noisy and whether the quantum computer should still be considered NISQ or might indeed be post-NISQ.

In addition, qubit fidelity alone will not be sufficient — full any-to-any qubit connectivity will be required, as well as greater coherence time and/or shorter gate execution time to assure significantly larger and deeper quantum circuits, and fine granularity of phase and probability amplitude to support non-trivial quantum Fourier transforms and quantum phase estimation, such as needed for quantum computational chemistry. But without at least three nines of qubit fidelity, all of those additional requirements would be moot.

Traditional view of beyond NISQ

It is commonly accepted in the quantum computing sector, and apparently by Preskill himself, that we will be stuck in the NISQ era until we arrive at the promised land of fault-tolerant quantum computing, the fault-tolerant era, which will require full quantum error correction (QEC).

But it is also commonly accepted in the quantum computing sector and by Preskill himself that full quantum error correction is likely to take years, likely many years.

In short, the conventional view has been that a post-NISQ era would by definition be the era of fault-tolerant quantum computing, the fault-tolerant era, which will require full quantum error correction (QEC).

Until that new era, we’re supposed to expect:

- Noisy qubits.

- Relatively simple circuits.

- Mediocre qubit connectivity, at best.

- Manual error mitigation.

- Clever design of algorithms and circuits to be more resilient to noise.

- Slow and gradual improvements in qubit quality. Gradually reducing the error rate, but still relatively noisy.

- Can’t rely on quantum Fourier transform or quantum phase estimation.

- Limited quantum advantage, if any.

- The grand promises of quantum computing will remain mostly unfulfilled.

- Never give up hope that the grand promise of full quantum error correction will address and solve all of these issues.

For more detail on quantum error correction, see my informal paper:

- Preliminary Thoughts on Fault-Tolerant Quantum Computing, Quantum Error Correction, and Logical Qubits

- https://jackkrupansky.medium.com/preliminary-thoughts-on-fault-tolerant-quantum-computing-quantum-error-correction-and-logical-1f9e3f122e71

The essential technical limitations of NISQ

NISQ has many technical limitations. The concept of NISQ itself doesn’t force these limitations, but real implementations of quantum computers according to NISQ tend to have these technical limitations.

Quantum computing has great promise, but in the near term it (and we) suffer due to these essential technical limitations:

- Qubit state is too susceptible to noise.

- Coherence time is too short. Requires circuits to be fairly short.

- Gate execution time is too long. Limits how large and complex a circuit can be executed in the coherence time.

- Operations on qubits (gates) are too unreliable.

- Measurement of qubits is too unreliable.

- Qubits have relatively low fidelity. Overall.

- Lack of full any-to-any qubit connectivity. Requires tedious and error-prone SWAP networks which further reduce the number of gates which can be executed within the coherence time.

- Lack of fine granularity of phase and probability amplitude. Precludes non-trivial quantum Fourier transforms and quantum phase estimation needed for application areas such as quantum computational chemistry.

- Large and complex algorithms are not supported. Due to the above limitations.

The negative consequences of the limitations of NISQ

The technical limitations of NISQ quantum computers result in a variety of negative consequences:

- Large and complex algorithms are not supported.

- Unable to support non-trivial quantum algorithms.

- Unable to support non-trivial quantum Fourier transforms.

- Unable to support quantum phase estimation.

- Unable to achieve any significant quantum advantage.

- Unable to demonstrate and deliver substantial business value.

- Unable to effectively exploit larger qubit counts.

- Risk of a severe Quantum Winter in a few years.

- Far too much of our expensive and best talent is being wasted trying to overcome NISQ limitations.

Qubit count is NOT an issue, at this stage

Qubit count was a big deal when we only had five, eight, ten, twelve, or sixteen qubits, but now that we have a plethora of quantum computers with 27, 32, 40, 53, 65, 80, and even 127 qubits, qubit count is never the limiting factor for designing and testing quantum algorithms.

Rather, qubit fidelity is the critical gating factor.

And lack of full any-to-any qubit connectivity is also a critical limiting factor.

Once we get past qubit fidelity and qubit connectivity being limiting factors, coherence time and gate execution time will become the critical limiting factors precluding larger and more complex quantum algorithms. And qubit count will still be unlikely to be a limiting factor.

But trapped-ion quantum computers DO need more qubits

Although qubit count is not a gating factor for superconducting transmon qubits, it is a gating factor for trapped-ion qubits, where even 32 qubits is a stretch, at this stage.

That said, trapped-ion quantum computers are likely to get to an interesting qubit count within the next couple of years, so then it will no longer be a gating factor to achieving the low end of practical quantum computing.

And quantum error correction would need a LOT more qubits

Not many qubits would be needed for a basic practical quantum computer based on the proposal of this informal paper, but full quantum error correction (QEC) will require a lot more physical qubits.

So while a basic practical quantum computer based on the proposal of this informal paper might require only 48 physical qubits, a functionally comparable fault-tolerant quantum computer with full quantum error correction would require 48,000 (48 thousand) physical qubits to achieve 48 perfect logical qubits if each perfect logical qubit required 1,000 physical qubits.

But all of that is irrelevant for the proposal of this informal paper which focuses on near-perfect qubits rather than perfect logical qubits.
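The arithmetic behind that comparison can be sketched in a few lines. This is only an illustration: the function name is hypothetical, and the figure of 1,000 physical qubits per logical qubit is the "if" stated above, not a measured overhead.

```python
def physical_qubits_needed(logical_qubits: int, physical_per_logical: int = 1000) -> int:
    """Total physical qubits needed to build the given number of logical qubits.

    The default of 1,000 physical qubits per logical qubit is the assumption
    used in the text, not a fixed property of quantum error correction.
    """
    return logical_qubits * physical_per_logical


near_perfect = 48                        # near-perfect qubits are used directly
with_qec = physical_qubits_needed(48)    # 48 logical qubits under full QEC

print(f"Near-perfect approach: {near_perfect:,} physical qubits")
print(f"Full QEC approach:     {with_qec:,} physical qubits")
print(f"Overhead factor:       {with_qec // near_perfect:,}x")
```

Changing `physical_per_logical` shows how sensitive the total is to the assumed overhead.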

For more detail on quantum error correction, see my informal paper:

- Preliminary Thoughts on Fault-Tolerant Quantum Computing, Quantum Error Correction, and Logical Qubits

- https://jackkrupansky.medium.com/preliminary-thoughts-on-fault-tolerant-quantum-computing-quantum-error-correction-and-logical-1f9e3f122e71

The grand (unfulfilled) promise of quantum error correction (QEC)

As lousy as NISQ itself is, the reason we are expected to tolerate its deficiencies is that this same hardware will become much more bearable and even usable once full quantum error correction is implemented, which, or so the grand narrative goes, will erase many, most, or all of the deficiencies that are commonly experienced with NISQ today. In theory, that is.

But, I don’t buy it.

After pondering, reviewing, and studying quantum error correction for a few years now, I’ve concluded that even though it may be able to deliver on some of its promises, it won’t be able to deliver on many of them in as definitive and comprehensive a manner as had been promised and expected. For details, see my informal paper:

- Why I’m Rapidly Losing Faith in the Prospects for Quantum Error Correction

- https://jackkrupansky.medium.com/why-im-rapidly-losing-faith-in-the-prospects-for-quantum-error-correction-5aed92ee7a52

It would be too depressing to even summarize my concerns here. Rest assured, full quantum error correction is not coming any time soon, it will be very expensive, and it won’t deliver on most of the promises that have been made for it.

In any case, the grand promise that quantum error correction was coming is what has kept interest in and tolerance of NISQ alive. Now, that needs to change.

The path to practical quantum computing

NISQ alone will never deliver practical quantum computing on the scale that people have been led to believe and expect. Fault-tolerant quantum computing, backed by quantum error correction, was supposed to be the path to practical quantum computing. Or, so the traditional story goes.

But since I have abandoned hope for quantum error correction, that brings us to the question of whether there is some alternative path to practical quantum computing, and what that path might be.

My answer is that I have switched horses in midstream, shifting my bet for practical quantum computing from full quantum error correction to near-perfect qubits.

What is post-NISQ quantum computing?

In a nutshell, here’s the gist of the proposal for post-NISQ quantum computing envisioned by this informal paper:

- Near perfect qubits. Four to five nines of qubit fidelity. Or maybe 3.75, 3.5, or 3.25 nines will be good enough for many applications. And maybe even only three nines will be needed for some applications. 3.5 to four nines should be the primary initial target.

- Roughly 48 qubits. Enough for a lot of applications, even if some applications require more. This should be enough for a basic level of practical quantum computing. Enough to support a 20-bit quantum Fourier transform to achieve a significant quantum advantage of 1,000,000X over classical solutions.

- Full any-to-any qubit connectivity. No more expensive and error-prone SWAP networks.

- Moderately large quantum circuits. Approximately 2,500 gates.

- Moderate improvement in coherence time. Whatever it takes to meet the goal for maximum circuit size based on gate execution time.

- Moderate improvement in gate execution time. Whatever it takes to meet the goal for maximum circuit size based on coherence time.

- Reasonably fine granularity for phase and probability amplitude. Enough to support non-trivial quantum Fourier transform and quantum phase estimation (at least 20 bits).

Seriously, that’s about it, all that’s needed.

Near-perfect qubits as the path to practical quantum computing

There is more on the path to practical quantum computing than qubits with higher fidelity, but qubits with higher fidelity, what I call near-perfect qubits, are the critical gating factor.

Other technical gating factors on the path to practical quantum computing were listed earlier; they include:

- Need for full any-to-any qubit connectivity. Critical for larger and more complex quantum algorithms.

- Need for longer coherence time. To enable larger and more complex quantum circuits.

- Need for shorter gate execution time. Enables larger and more complex quantum circuits since more gates can be executed in a given coherence time.

- Need for higher qubit measurement fidelity. Included in overall qubit fidelity, but technically distinct.

- Need for fine granularity of phase and probability amplitude. Enables quantum Fourier transform and quantum phase estimation, such as is needed for quantum computational chemistry.

But none of those key gating factors on the path to practical quantum computing will matter if we don’t first have much higher qubit fidelity, what I refer to as near-perfect qubits, in the range of four to five nines of qubit fidelity, although 3.75 or even 3.5 nines of qubit fidelity might be enough for many applications, and even 3.25 or even three nines of qubit fidelity might be enough for some applications. 3.5 to four nines should be the primary initial target.

That’s as much as you need to know about qubit fidelity for the purposes of this informal paper, but for more details, see my informal paper:

- What Is a Near-perfect Qubit?

- https://jackkrupansky.medium.com/what-is-a-near-perfect-qubit-4b1ce65c7908

What are nines of qubit fidelity?

When I say four nines of qubit fidelity, that means a qubit reliability of 99.99%.

Three nines would be 99.9% reliability.

3.5 nines would be 99.95% reliability.

98.5% reliability would be 1.85 nines.
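The examples above imply a simple rule for fractional nines: the whole part gives the number of leading nines, and the fractional part scales the remaining error linearly, so that 3.5 nines is 99.95% and 1.85 nines is 98.5%. The sketch below encodes that reading. The function name and the formula are inferred from the examples here, not taken from a stated definition. Note that a logarithmic convention (nines as the negative base-10 logarithm of the error rate) would give different fractional values; under it, 99.95% is about 3.3 nines.

```python
import math


def nines_to_fidelity(nines: float) -> float:
    """Convert nines of qubit fidelity to a fidelity fraction.

    Assumed convention (inferred from the examples in the text):
    error rate = 10**(-whole part) * (1 - fractional part).
    """
    whole = math.floor(nines)
    fraction = nines - whole
    error_rate = 10.0 ** (-whole) * (1.0 - fraction)
    return 1.0 - error_rate


for nines in (4, 3, 3.5, 1.85):
    print(f"{nines} nines -> {nines_to_fidelity(nines):.3%} reliability")
```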

For more on nines for qubit fidelity, see my informal paper:

3.5 to four nines should be the primary initial target for qubit fidelity

Although 3.25 nines of qubit fidelity might be sufficient for some applications and five nines may be overkill for many applications, my feeling is that the best primary initial target for a post-NISQ quantum computer should be the 3.5 to four nines range.

Of course, there may be any number of stepping stones or milestones to get to that goal. Whether any of those preliminary milestones actually constitute a post-NISQ quantum computer or practical quantum computer will be debatable, but the test is simply whether quantum algorithm designers and application developers can achieve some significant quantum advantage, approximately 1,000,000X over classical solutions, such as by using a 20-bit quantum Fourier transform or quantum phase estimation.

Minimal quantum advantage — 1,000X advantage over classical solutions — may or may not be acceptable, but if such an advantage delivers real business value, that might be sufficient to declare that we have achieved practical quantum computing and that we now have a post-NISQ quantum computer.
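One way to see where these round numbers come from: a 20-bit register spans 2^20 = 1,048,576 values, which is roughly the 1,000,000X figure. The sketch below makes that explicit. Pairing the 1,000X figure with a 10-bit transform is an assumption made here for illustration, and the function name is hypothetical.

```python
def advantage_factor(bits: int) -> int:
    """Number of distinct values spanned by a register of the given width."""
    return 2 ** bits


# 10 bits for the 1,000X case is an assumption; 20 bits is from the text.
for bits, label in ((10, "minimal"), (20, "significant")):
    print(f"{bits}-bit transform -> about {advantage_factor(bits):,}X ({label})")
```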

If the qubit error rate is low enough it shouldn’t be considered noisy — or NISQ

What technical criteria should be used to justify calling one qubit noisy and another qubit not noisy?

There is no definitive answer, but I’ll offer a pragmatic approach.

Ultimately, it simply comes down to whether you can run your quantum algorithm or circuit with no errors at all, or at least with not too many errors.

We also have the option of running a quantum circuit a number of times and then looking at the statistical average, so that if the error rate is low enough, we should see a majority or plurality of results that are consistent and likely correct, even if some fraction of the results are inconsistent.

Here are some potential thresholds for qubit fidelity to achieve results that are consistent enough that we can statistically determine the likely valid result even if errors cause results to sometimes be inconsistent:

- 2.75 nines, 100 gates. Not really credible as non-noisy. Technically, this sure still seems to be NISQ.

- Three nines, 1,000 gates. May still be too noisy, but some plausibility. Reasonably low noise. Debatable, but a plausible threshold for no longer being noisy/NISQ.

- 3.25 nines, 1,500 gates. Still a bit noisy, but acceptable for some applications. Reasonably beyond three nines seems a reasonable threshold.

- 3.5 nines, 2,500 gates. Not really that noisy. Still in a gray zone near noisy/NISQ, but credible for beyond NISQ.

- 3.75 nines, 3,500 gates. Really starting to quiet down. Sure, still some noise, but may be close enough to beyond NISQ.

- Four nines, 5,000 gates. How could this still be considered noisy? Very low noise. Not credible to still consider this noisy/NISQ.

- Five nines, 25,000 gates. Feels like a slam dunk for beyond noisy/NISQ to me.

- Six nines, 125,000 gates. Sure, some applications could require this fidelity, but that seems a bit extreme for many applications.

- Beyond six nines? Out of the question at this stage. Will require some fundamental breakthroughs in the physics. But as they say, hope does spring eternal.

This informal paper takes the position that being able to reliably execute quantum circuits with 2,500 gates is clearly no longer noisy or NISQ.

Whether executing 1,000, 1,500, or 2,000 gates is enough to claim non-noisiness can be debated. Any of those intermediate thresholds could reasonably be considered still noisy, borderline, or definitely non-noisy.

Sorry, but I don’t have any solid math or explicit reasoning for those judgments, but I don’t think that they’re unreasonable, and they’re good enough for the purposes of this informal paper, a mere modest proposal.
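As a rough sanity check only, the sketch below assumes that every gate fails independently with the same error rate, so that the chance of a completely error-free run is (1 - error rate) raised to the number of gates. That independence model, the function name, and the conversion of fractional nines to error rates (for example, 3.5 nines taken as a 0.05% error rate) are assumptions for illustration, not the reasoning behind the thresholds listed above.

```python
def error_free_probability(error_rate: float, gates: int) -> float:
    """Chance that a circuit runs with zero errors, assuming independent gate errors."""
    return (1.0 - error_rate) ** gates


# (label, assumed per-gate error rate, gate count) for the thresholds listed above.
thresholds = [
    ("2.75 nines", 0.0025, 100),
    ("3 nines", 0.001, 1_000),
    ("3.25 nines", 0.00075, 1_500),
    ("3.5 nines", 0.0005, 2_500),
    ("3.75 nines", 0.00025, 3_500),
    ("4 nines", 0.0001, 5_000),
    ("5 nines", 0.00001, 25_000),
    ("6 nines", 0.000001, 125_000),
]

for label, error_rate, gates in thresholds:
    p = error_free_probability(error_rate, gates)
    print(f"{label:>10}, {gates:>7,} gates: {p:.1%} chance of an error-free run")
```

Under this simple model, three nines with 1,000 gates gives roughly a 37% chance of an error-free run, and four nines with 5,000 gates roughly 61%, so a single run cannot be trusted and the result has to be determined statistically over many runs.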

Circuit repetitions (shots) to compensate for lingering errors

Near-perfect qubits are not absolutely perfect, so errors will indeed occur on occasion. The simplest and maybe best approach to dealing with these lingering errors is to execute the quantum circuit a number of times — called circuit repetitions or shot count or shots — and then examine the results statistically to determine the correct result of the quantum computation.

If the error rate is low enough, we should see a majority or plurality of circuit results that are consistent and likely correct, even if some fraction of the results are inconsistent.

I also sometimes refer to this process as poor man’s error correction.

It can be effective if the error rate is low enough, but is not so effective for noisy (NISQ) qubits.

For more on this process, see my paper:

- Shots and Circuit Repetitions: Developing the Expectation Value for Results from a Quantum Computer

- https://jackkrupansky.medium.com/shots-and-circuit-repetitions-developing-the-expectation-value-for-results-from-a-quantum-computer-3d1f8eed398
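The plurality approach described above can be sketched with a toy simulation. It assumes each shot returns the correct bitstring with a fixed probability and otherwise returns a uniformly random wrong bitstring. Real errors are not uniform, and the names `run_shots` and `plurality_result`, the 10-bit answer, and the 30% success probability are all hypothetical.

```python
import random
from collections import Counter


def run_shots(correct: str, success_probability: float, shots: int,
              rng: random.Random) -> Counter:
    """Simulate repeated circuit runs and tally the measured bitstrings."""
    width = len(correct)
    counts: Counter = Counter()
    for _ in range(shots):
        if rng.random() < success_probability:
            counts[correct] += 1
        else:
            # Toy error model: a failed shot yields some other bitstring,
            # drawn uniformly at random.
            wrong = correct
            while wrong == correct:
                wrong = format(rng.getrandbits(width), f"0{width}b")
            counts[wrong] += 1
    return counts


def plurality_result(counts: Counter) -> str:
    """Return the most frequently observed bitstring."""
    return counts.most_common(1)[0][0]


rng = random.Random(42)  # fixed seed so the run is repeatable
counts = run_shots("1011001110", success_probability=0.3, shots=200, rng=rng)
print("Plurality result:", plurality_result(counts))
print("Top three counts:", counts.most_common(3))
```

Even though most individual shots are wrong in this toy setup, the wrong answers are scattered across many different bitstrings while the correct answer repeats, which is what lets the plurality stand out.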

Error mitigation and error suppression

Use of near-perfect qubits and my so-called poor man’s error correction (circuit repetitions and statistical averaging) may cover many cases and relatively simpler quantum algorithms, but I do expect that more advanced or complex quantum algorithms will still need some degree of manual error mitigation or even error suppression as is done today with NISQ quantum computers.

The key point here is that the need for quantum error mitigation — and maybe even quantum error suppression — will be dramatically reduced with near-perfect qubits.

For a brief overview from IBM:

- What’s the difference between error suppression, error mitigation, and error correction?

- https://research.ibm.com/blog/quantum-error-suppression-mitigation-correction

For some deeper detail:

- Error suppression and error mitigation with Qiskit Runtime

- https://qiskit.org/documentation/partners/qiskit_ibm_runtime/tutorials/Error-Suppression-and-Error-Mitigation.html

Q-CTRL has software called Fire Opal which may or may not be comparable to IBM’s approach to quantum error suppression:

- Software tools for quantum control: Improving quantum computer performance through noise and error suppression

- https://arxiv.org/abs/2001.04060

But, again, the goal of near-perfect qubits is to eliminate much of the need for such band-aid fixes. Let the vendors fix their qubits so users don’t have to jump through hoops to compensate for mediocre qubit fidelity.

But the goal of this proposal is to reduce and minimize the need for error mitigation

Yes, even with a full implementation of this proposal, some degree of quantum error mitigation or quantum error suppression may still be required, at least for some more complex quantum algorithms, but the overall goal of this proposal, focused on near-perfect qubits, is to dramatically reduce and minimize the need for such mitigation measures.

Full any-to-any qubit connectivity is essential

Lack of full any-to-any qubit connectivity is problematic, precluding larger and more complex circuits.

Limited connectivity requires tedious and error-prone SWAP networks, which further reduce the number of gates that can be executed within the coherence time.
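To give a feel for the cost of SWAP networks, here is a crude sketch in Python. It assumes a hypothetical linear chain of qubits where only neighbors can interact, counts each two-qubit interaction from the original layout, and ignores swapping qubits back. Real devices use other layouts and real compilers route more cleverly, so treat the numbers as illustrative only. The one standard fact used is that a SWAP gate decomposes into three CNOT gates.

```python
from itertools import combinations

CNOTS_PER_SWAP = 3  # a SWAP gate decomposes into three CNOT gates


def swaps_on_line(q1: int, q2: int) -> int:
    """SWAPs needed to make two qubits adjacent on a linear chain."""
    return max(abs(q1 - q2) - 1, 0)


def extra_cnots(pairs) -> int:
    """Extra CNOTs spent on routing, counting each pair from the original layout."""
    return sum(CNOTS_PER_SWAP * swaps_on_line(a, b) for a, b in pairs)


n = 20  # hypothetical register size
all_pairs = list(combinations(range(n), 2))  # every qubit interacts with every other
print("two-qubit interactions:", len(all_pairs))
print("extra CNOTs on a linear chain:", extra_cnots(all_pairs))
print("extra CNOTs with full connectivity:", 0)
```

With full any-to-any connectivity the routing overhead in this model is zero, so every gate executed within the coherence time is a gate the algorithm actually asked for.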

But trapped-ion qubits already have full qubit connectivity

Although trapped-ion quantum computers do need more qubits, the really good news is that trapped-ion qubits already implicitly have full any-to-any qubit connectivity, which is a real challenge for superconducting transmon qubits.

Coherence time and gate execution time will eventually be critical gating factors, but not yet

Once we get past qubit fidelity and qubit connectivity being limiting factors, coherence time and gate execution time will become the critical limiting factors precluding larger and more complex quantum algorithms.

Must enable larger and more complex quantum circuits to achieve practical quantum computing

This informal paper won’t go into any detail, but practical quantum computing will only be possible if we can enable larger and more complex quantum circuits and algorithms.

How large and how complex?

Well, this paper will leave that question unanswered in any definitive sense, but as a rough guide to maximum circuit size, a practical quantum computer needs to:

- Certainly support hundreds of gates.

- Certainly support 1,000 gates.

- Certainly support 1,500 gates.

- Certainly support 2,000 gates.

- Certainly support 2,500 gates.

- Possibly support 5,000 gates.

- Maybe support 10,000 gates.

- Not likely to support 100,000 gates. At least not initially.

- Not likely to support a million gates. At least not within the scope of this proposal.

- Not likely to support millions of gates. Fantasy.

- Not likely to support billions of gates. Fantasy.

The earliest instances of practical quantum computers will most likely come in at the lower portion of that range.

Evolutionary advances of quantum computers should continually increase maximum circuit and algorithm size.

Target of 2,500 gates for maximum circuit size for a basic practical quantum computer

By my own estimation, my proposal for a 48-qubit quantum computer with fully-connected near-perfect qubits would need a maximum circuit size on the order of 2,500 gates, primarily to support a 20-bit quantum Fourier transform.

Dozens, not hundreds, of qubits are a more practical goal for the next stage of quantum computing

IBM has already proven that they can produce a quantum computer with more than 100 qubits, and is expected to make a quantum computer with hundreds of qubits (the 433-qubit Osprey) available within the next few months, but this is all to no avail without much higher qubit fidelity, full any-to-any qubit connectivity, greater coherence time, and fine granularity of phase and probability amplitude.

Sure, we could have hundreds of near-perfect qubits in the not too distant future, and even moderately greater coherence time, but the idea of running larger and more complex quantum circuits using hundreds of qubits seems well out of the question.

Sure, coherence time can be extended moderately, and gate execution time can be shortened moderately, resulting in a significant increase in maximum circuit size, but we may be talking no more than a few thousand gates, not the tens of thousands of gates or even hundreds of thousands of gates that circuits using hundreds of qubits are likely to require.

So, for now, and the foreseeable future, we should focus on tens or dozens of high-quality qubits. Even 100 qubits may be a stretch.

Whether it is qubit fidelity or coherence time which is the actual technical gating factor is not yet clear, but either way, circuit size and complexity will be the overall limiting factor.

Some possible target qubit counts for post-NISQ quantum computing:

- 28 qubits.

- 32 qubits.

- 36 qubits.

- 40 qubits.

- 44 qubits.

- 48 qubits.

- 50 qubits.

- 56 qubits.

- 64 qubits.

- 72 qubits.

- 80 qubits.

- 96 qubits.

Whether 96 qubits, or even 80 qubits, is too much of a stretch for the next two to seven years is unclear.

I suppose that if 96 qubits is doable, then 100 qubits will be doable as well.

But I don’t see 128, 160, or 256 qubits as practical in the next two to seven years, at least in terms of quantum circuits which are using all 128, 160, or 256 qubits in reasonably large and reasonably complex circuits, which could amount to tens of thousands or even hundreds of thousands of gates.

Target 48 fully-connected near-perfect qubits as the sweet spot goal for a basic practical quantum computer

Every application and application category and user will have their own hardware requirements, but I surmise that 48 fully-connected near-perfect qubits should be enough for many applications and users to at least get started with something that shows preliminary signs of a significant quantum advantage.

For more details, see my informal paper:

- 48 Fully-connected Near-perfect Qubits As the Sweet Spot Goal for Near-term Quantum Computing

- https://jackkrupansky.medium.com/48-fully-connected-near-perfect-qubits-as-the-sweet-spot-goal-for-near-term-quantum-computing-7d29e330f625

Terminology for post-NISQ quantum computers — NPSSQ, NPISQ, and NPLSQ

Post-NISQ is too vague a characterization for a real quantum computer. I propose replacing NISQ with three alternative terms:

- NPSSQ. Near-Perfect Small-Scale Quantum computer. Less than 50 qubits.

- NPISQ. Near-Perfect Intermediate-Scale Quantum computer. From 50 to a few hundred qubits.

- NPLSQ. Near-Perfect Large-Scale Quantum computer. More than a few hundred or more than 1,000 qubits.

Whether 500 or 750 qubits would be considered still intermediate scale or should be considered large scale could be a matter of debate. Using Preskill’s model, more than a few hundred should probably be considered beyond intermediate scale.
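The proposed terms can be expressed as a small classification function. This is only a sketch: the function name `classify_post_nisq` is hypothetical, and because the boundary between intermediate scale and large scale is left open to debate above, the `intermediate_max` parameter and its default of 300 are assumptions standing in for "a few hundred".

```python
def classify_post_nisq(qubits: int, intermediate_max: int = 300) -> str:
    """Map a qubit count to NPSSQ, NPISQ, or NPLSQ.

    intermediate_max is an assumed stand-in for "a few hundred" qubits;
    the text leaves the exact boundary open.
    """
    if qubits < 1:
        raise ValueError("qubit count must be positive")
    if qubits < 50:
        return "NPSSQ"  # Near-Perfect Small-Scale Quantum computer
    if qubits <= intermediate_max:
        return "NPISQ"  # Near-Perfect Intermediate-Scale Quantum computer
    return "NPLSQ"      # Near-Perfect Large-Scale Quantum computer


for count in (48, 50, 256, 1000):
    print(count, classify_post_nisq(count))
```

Passing a different `intermediate_max`, such as 750, shows how the debatable cases shift between intermediate scale and large scale.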

For more details on this proposed terminology, see my informal paper:

- Beyond NISQ — Terms for Quantum Computers Based on Noisy, Near-perfect, and Fault-tolerant Qubits

- https://jackkrupansky.medium.com/beyond-nisq-terms-for-quantum-computers-based-on-noisy-near-perfect-and-fault-tolerant-qubits-d02049fa4c93

Expectations for significant quantum advantage

Quantum computing is all for naught if we can’t achieve a dramatic quantum advantage or at least a significant quantum advantage relative to classical solutions. So, the expectation for post-NISQ quantum computing — practical quantum computing — is to achieve at least significant quantum advantage.

I personally define three levels of quantum advantage:

- Minimal quantum advantage. 1,000X a classical solution.

- Substantial or significant quantum advantage. 1,000,000X a classical solution.

- Dramatic quantum advantage. One quadrillion X a classical solution. Presumes 50 entangled qubits operating in parallel, evaluating 2⁵⁰ = one quadrillion alternatives in a solution space of 2⁵⁰ values (quantum states.)
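The arithmetic behind these three levels can be sketched in Python. The sketch follows the idealized framing of the last bullet, where n entangled qubits evaluate 2 to the power n alternatives in parallel, and asks how many qubits that framing implies for each level. The names `ADVANTAGE_LEVELS` and `qubits_for_advantage` are hypothetical, and treating advantage as a simple count of parallel alternatives is an assumption, not a performance model.

```python
ADVANTAGE_LEVELS = {
    "minimal": 1_000,
    "substantial or significant": 1_000_000,
    "dramatic": 2 ** 50,  # roughly one quadrillion
}


def qubits_for_advantage(factor: int) -> int:
    """Smallest n such that 2**n >= factor, using exact integer arithmetic."""
    return (factor - 1).bit_length()


for name, factor in ADVANTAGE_LEVELS.items():
    n = qubits_for_advantage(factor)
    print(f"{name}: {factor:,}X needs at least {n} qubits (2**{n} = {2 ** n:,})")
```

Note that 2 to the power 50 is slightly more than one quadrillion, so "one quadrillion" is a rounded figure.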

Minimal quantum advantage is not terribly interesting or exciting, and although it is better than nothing, it’s not the goal of the vision of this informal paper. Still, it could be acceptable as an early, preliminary milestone or stepping stone to greater quantum advantage.

Dramatic quantum advantage is the ultimate goal for quantum computing, but is less likely for the limited qubit fidelity envisioned by this informal paper. It may take a few more nines of qubit fidelity and 10X the circuit size and coherence time to get there, so don’t expect it, at least not in the early versions of post-NISQ quantum computers.

Substantial or significant quantum advantage is a more reachable and more satisfying goal over the coming years, even if not the ultimate goal for practical quantum computing. Achieving a millionfold computational advantage over classical solutions is quite impressive, even if not as impressive as dramatic quantum advantage.

In any case, a core goal for the proposal of this informal paper is to achieve quantum advantage, and it needs to be an advantage that delivers real value to the organization.

For more on dramatic quantum advantage, see my paper:

- What Is Dramatic Quantum Advantage?

- https://jackkrupansky.medium.com/what-is-dramatic-quantum-advantage-e21b5ffce48c

For more on minimal quantum advantage and substantial or significant quantum advantage — what I call fractional quantum advantage, see my paper:

- Fractional Quantum Advantage — Stepping Stones to Dramatic Quantum Advantage

- https://jackkrupansky.medium.com/fractional-quantum-advantage-stepping-stones-to-dramatic-quantum-advantage-6c8014700c61

Must deliver substantial real value to the organization

Whatever the technical merits of this proposal, the only important metric is the extent to which a quantum solution implemented on the proposed quantum computer delivers substantial real business value to the organization deploying such a solution.

Post-NISQ quantum computing should enable The ENIAC Moment

Achieving The ENIAC Moment of quantum computing, the first moment when a quantum computer is able to demonstrate a production-scale quantum application achieving some significant level of quantum advantage, won’t require the perfect logical qubits of full quantum error correction, but won’t be possible with qubits as noisy as NISQ covers — and will require greater qubit connectivity and larger maximum circuit size than are generally available using NISQ.

But The ENIAC Moment will require near-perfect qubits, which, by definition, since they are no longer noisy, will never be available using NISQ.

Post-NISQ quantum computers will almost certainly enable The ENIAC Moment to be reached, but NISQ alone has no chance of achieving that milestone.

For more on The ENIAC Moment of quantum computing, see my paper:

- When Will Quantum Computing Have Its ENIAC Moment?

- https://jackkrupansky.medium.com/when-will-quantum-computing-have-its-eniac-moment-8769c6ba450d

This proposal is independent of the qubit technology

This proposal should apply equally well to all general-purpose qubit technologies, including:

- Superconducting transmon qubits.

- Trapped-ion qubits.

- Semiconductor spin qubits.

- Other qubit technologies. Provided that they are general purpose.

But the focus of this proposal is on general-purpose quantum computers, not more specialized forms of quantum computing. At present, the latter includes:

- Quantum annealing systems.

- Neutral-atom qubits. Running in analog mode.

- Photonic quantum computers. Including boson sampling devices.

Timeframe of next two to seven years

When might the practical quantum computers envisioned by this informal paper become available? Simple answer: No really good idea. But nominally let’s say we’re looking at the two to seven-year timeframe.

We really hope that the early versions of the post-NISQ quantum computers envisioned by this informal paper can be ready within two to four years, but those early versions might still have qubit fidelity that is too low, or a maximum circuit size that is too limited, to support fully practical quantum applications for many applications and application categories.

Or, maybe the early versions available in two to four years are great for a wide range of quantum applications, but still not acceptable for a number of the key, critical applications and application categories of interest, which may take a couple more years, delaying from three years to five or six or seven years for tougher applications.

And undoubtedly, there will still be plenty of super-tough applications and application categories which will need capabilities beyond even those envisioned by this informal paper, such as needing six nines of qubit fidelity and 50,000-gate circuits.

Multiple stages of post-NISQ quantum computing

As highlighted by a preceding section, the initial version envisioned by this paper is unlikely to be sufficient for tougher applications, so the grand vision of post-NISQ quantum computing, still well short of full quantum error correction, would likely unfold in stages.

I’ll just suggest a few potential stages, not intending to lay out a detailed and concrete roadmap or plan, but simply to highlight the range of possibilities. Actually, I’ll list stages in the three most critical technical factors, without suggesting how the timing or pairing up of factors might occur.

The three critical factors:

- Qubit fidelity.

- Maximum circuit size.

- Qubit count.

Stages for qubit fidelity:

- Three nines.

- 3.25 nines.

- 3.50 nines.

- 3.75 nines.

- Four nines.

- 4.25 nines.

- 4.50 nines.

- 4.75 nines.

- Five nines.

- 5.25 nines.

- 5.50 nines.

- 5.75 nines.

- Six nines.

Stages for maximum circuit size:

- 500 gates.

- 750 gates.

- 1,000 gates.

- 1,500 gates.

- 2,000 gates.

- 2,500 gates.

- 3,500 gates.

- 5,000 gates. Not in initial versions.

- 7,500 gates. Not in initial versions.

- 10,000 gates. Not in initial versions.

- 25,000 gates. Not so likely until later.

- 50,000 gates. Not so likely until later.

- Not likely to support 100,000 gates.

- Not likely to support a million gates.

- Not likely to support millions of gates.

- Not likely to support billions of gates.

And in parallel with those two critical factors we would have stages for qubit count, the third critical factor, which depend on progress in the other two:

- 28 qubits.

- 32 qubits.

- 36 qubits.

- 40 qubits.

- 44 qubits.

- 48 qubits.

- 50 qubits.

- 56 qubits.

- 64 qubits.

- 72 qubits.

- 80 qubits. Might be problematic — for maximum circuit size.

- 96 qubits. A real stretch — for maximum circuit size.

- 100 qubits. Maybe.

- More than 100 qubits. Not likely — due to maximum circuit size.
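To see how the qubit fidelity stages and the circuit size stages interact, here is a back-of-the-envelope sketch in Python. It assumes that every gate fails independently with the same error rate, which is a simplification not made by this paper, and it uses only whole nines, since conventions for fractional nines differ. The function names are hypothetical.

```python
def gate_error_rate(nines: int) -> float:
    """Whole nines of fidelity to error rate, e.g. 4 nines = 99.99% = 1e-4 error."""
    return 10.0 ** (-nines)


def circuit_success_probability(nines: int, gates: int) -> float:
    """Chance that no gate fails, assuming independent errors on every gate."""
    return (1.0 - gate_error_rate(nines)) ** gates


for nines in (3, 4, 5, 6):
    row = ", ".join(
        f"{gates:>6,} gates: {circuit_success_probability(nines, gates):6.1%}"
        for gates in (500, 2_500, 10_000, 50_000)
    )
    print(f"{nines} nines -> {row}")
```

Under this simplified model, a 2,500-gate circuit at three nines rarely runs error-free, while at four nines most shots do, which fits the idea of pairing near-perfect qubits with circuit repetitions.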

How long might the post-NISQ era last? Until it runs out of steam

Nominally the post-NISQ era is characterized by at least four to five nines of qubit fidelity. But that’s intended as more of a lower bound than an upper limit.

We will still be in the post-NISQ era even if qubit fidelity reaches six or even seven nines, or even higher.

So what would mark the end of the post-NISQ era then? There is no definitive mark or milestone to mark the end of the post-NISQ era. It just keeps going and going — hopefully.

Of course, it would be no surprise if at some point qubit fidelity finally hits a wall and even best efforts are not able to push it any higher. That would indeed mark the end of the post-NISQ era.

Of course, incremental but minor improvements could still occur even after qubit fidelity tops out, but it wouldn’t make sense to claim that the post-NISQ era is making any great progress at that stage.

But, qubit fidelity is only one of the critical technical gating factors for progress in post-NISQ quantum computing — we also have coherence time, gate execution time, maximum circuit size, and fine granularity of phase and probability amplitude, any of which could also mark an end to the post-NISQ era if progress in any of those technical gating factors stalls.

What might come after the post-NISQ era? Unknown

And if the post-NISQ era does come to an end or technical wall, such as inability to push qubit fidelity or maximum circuit size higher, what might come next? The answer is that it is completely unknown at this stage.

Some possibilities:

- Some more practical design for quantum error correction.

- Some new qubit technology.

- Some new quantum computing architecture.

- Some new quantum computing programming model.

- Some completely different computing technology.

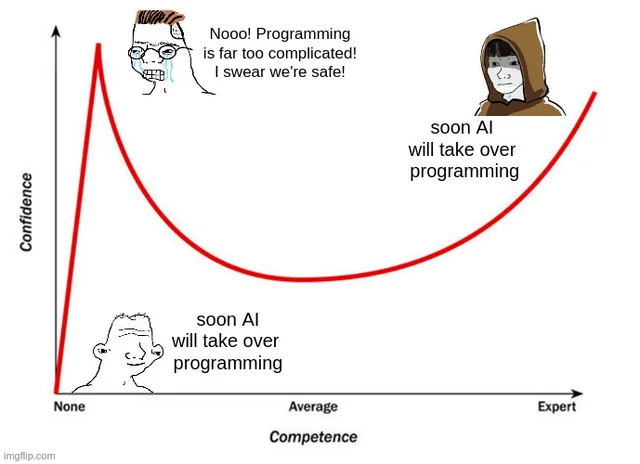

What about software and tools for developers?

Quantum infrastructure software as well as tools for designers of quantum algorithms and developers of quantum applications are important as well, but I don’t assess them as being critical and problematic gating factors in the long march to practical quantum computing.

Software may indeed be hard, but hardware is really hard. This informal paper focuses on the really hard problems.

Software and tools are a relatively manageable task while improving quantum hardware is a monstrous technical challenge, requiring advances for both science and engineering.

That doesn’t mean that software and tools will be trivial or easy, just that they are not even close to being major sources of concern, as is the case with hardware.

Besides, my main concern on the software and tools fronts is that we may be overtooling — wasting too much energy, resources, and attention on software and tools simply to compensate for current deficiencies in the hardware, which the proposal of this informal paper seeks to rectify.

What to do until post-NISQ quantum computers arrive? Use a simulator up to 32 to 50 qubits

So, what are quantum algorithm designers and quantum application developers supposed to do until we get to a post-NISQ practical quantum computer based on near-perfect qubits? The simple answer is to use a classical quantum simulator configured to match what is expected for a basic practical quantum computer.
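The 32 to 50 qubit range for simulation reflects how quickly classical memory runs out. Here is a minimal sketch in Python, assuming a full state-vector simulator that stores one double-precision complex amplitude (16 bytes) per quantum state. Other simulation techniques have different costs, and the function names are hypothetical.

```python
BYTES_PER_AMPLITUDE = 16  # one complex number stored as two 64-bit floats


def statevector_bytes(qubits: int) -> int:
    """Memory needed to hold all 2**qubits amplitudes of a full state vector."""
    return (2 ** qubits) * BYTES_PER_AMPLITUDE


def human_readable(n_bytes: int) -> str:
    """Format a byte count using binary units."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    value = float(n_bytes)
    index = 0
    while value >= 1024 and index < len(units) - 1:
        value /= 1024
        index += 1
    return f"{value:g} {units[index]}"


for qubits in (28, 32, 40, 48, 50):
    print(f"{qubits} qubits: {human_readable(statevector_bytes(qubits))}")
```

Each added qubit doubles the memory, so under this assumption the low end of the range fits on a large server while the high end calls for a very large cluster.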

There are actually a bunch of activities that can be pursued while waiting for post-NISQ quantum computers to arrive:

- Focus on simulation of scalable algorithms.

- Harangue hardware vendors to prioritize the technical requirements for post-NISQ quantum computing. Just tell them (repeatedly!) to read this paper — and just do it.

- Focus on advanced algorithm design. Kick the addiction to NISQ-driven perversions of good algorithm design.

- Focus on designing great quantum algorithms, not mediocre ones.

- Focus on analysis tools to support scalable quantum algorithms.

What to avoid while waiting for post-NISQ quantum computers

- Avoid using real NISQ hardware. Stick to simulators.

- Any and all NISQ-related efforts. Papers, talks, conferences, seminars, books.

- Focusing any attention on full quantum error correction efforts.

- Variational methods. Wait for quantum Fourier transform and quantum phase estimation.

- Anything that doesn’t have significant quantum advantage as its primary goal.

- Anything that is unlikely to deliver substantial real business value to users.

Risk of a severe Quantum Winter in a few years

There is no significant chance for a so-called Quantum Winter if the proposal envisioned in this informal paper is implemented, at least in its initial stages, over the next two to three years.

But failure to implement this proposal or something comparable has a high risk of leading to a severe Quantum Winter two to three years from now if people — investors and corporate decision-makers — don’t feel that quantum computing is either delivering or close to delivering on a reasonable fraction of its many and grand promises. Or if the technical promises are in fact met but the quantum solution just doesn’t deliver substantial real business value that was expected or is acceptable to the organization.

For more on Quantum Winter, see my paper:

- Risk Is Rising for a Quantum Winter for Quantum Computing in Two to Three Years

- https://jackkrupansky.medium.com/risk-is-rising-for-a-quantum-winter-for-quantum-computing-in-two-to-three-years-70b3ba974eca

Physical qubits, not perfect logical qubits

Perfect logical qubits will still require full quantum error correction, so the near-perfect qubits proposed in this informal paper would still be raw physical qubits, not perfect logical qubits.

Although, at some stage, maybe six nines or maybe even just five nines of qubit fidelity, the qubit fidelity may be perceived as so good for modest to moderate-sized quantum algorithms and circuits that people might treat the qubits as if they were perfect logical qubits.

Is near-perfect still noisy (NISQ) or truly post-NISQ?

Well, depending on who you talk to and depending on how committed you are to associating post-NISQ with fault-tolerant quantum computing and full quantum error correction, my proposed near-perfect qubits with only four nines (or 3.5 nines) of qubit fidelity could still be considered noisy or not. A purist might conclude that my proposal in this informal paper is still NISQ.

My thinking is that noisy really means that there are so many errors that the results of a quantum computation are not usable or only marginally usable. So, if my proposed near-perfect qubits have so few errors that the results are usually or at least very frequently usable, then that’s a noise level that is low enough to consider it not to be noisy.

Although the target of my proposed near-perfect qubits is four to five nines of qubit fidelity, I also allow for the possibility that 3.75, 3.5, 3.25, or maybe even three nines could be acceptable for at least some applications. But below three nines would indeed be generally considered to be too noisy, and hence still NISQ.

In short, I don’t expect everyone to agree with my thinking and conclusion, but I do think it is very credible to assert that my proposed near-perfect qubits would in fact signal the onset of the post-NISQ era — assuming the other requirements detailed in this informal paper are also met.

Potential for minimalist quantum error correction

Although full quantum error correction is extremely expensive (upwards of 1,000 physical qubits per logical qubit), there’s no law that says quantum error correction must be that extreme. Or even full per se.

Even with full quantum error correction using 1,000 physical qubits for each perfect logical qubit, there will still be some tiny residual error.

If you can tolerate a residual error well above the tiny residual error of full quantum error correction, a dramatically smaller number of physical qubits are required for each logical qubit.

The critical factor is called d, the distance in a surface code. Read my paper on fault-tolerant quantum computing, quantum error correction, and logical qubits for details.

Perfect logical qubits using 1,000 physical qubits use a d of 29 or 31.

Square d to get the approximate number of physical qubits required for each logical qubit.

But d can be as small as 3 or 5 (always odd), which doesn’t improve qubit fidelity very much, but only requires 9 (3²) or 25 (5²) physical qubits per logical qubit.

So, 1,000 physical qubits could enable 111 or 40 logical qubits respectively rather than the single logical qubit with full quantum error correction with d of 29 or 31.
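The arithmetic above can be checked with a short Python sketch. It uses only the approximation stated in the text, that a surface code of distance d needs about d squared physical qubits per logical qubit. The function names and the 1,000-qubit budget are illustrative, and the sketch says nothing about how much each distance actually improves fidelity.

```python
def physical_per_logical(d: int) -> int:
    """Approximate physical qubits per logical qubit for surface code distance d."""
    if d < 3 or d % 2 == 0:
        raise ValueError("distance d is expected to be an odd number of at least 3")
    return d * d


def logical_qubits(total_physical: int, d: int) -> int:
    """Whole logical qubits that fit in a budget of physical qubits."""
    return total_physical // physical_per_logical(d)


BUDGET = 1_000  # physical qubits, matching the figure used in the text
for d in (3, 5, 29, 31):
    print(
        f"d = {d:>2}: {physical_per_logical(d):>3} physical per logical, "
        f"{logical_qubits(BUDGET, d):>3} logical qubits from {BUDGET:,} physical"
    )
```

The same 1,000 physical qubits that yield a single logical qubit at d of 29 or 31 yield dozens at d of 5 and over a hundred at d of 3.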

Granted, it doesn’t correct errors as well as full quantum error correction with 1,000 physical qubits per logical qubit, but near-perfect qubits avoid many of the errors anyways, and a little boost in error correction could deliver some interesting added-value.

This is a very interesting possibility.

This is well beyond the scope of this informal paper, but worthy of additional research.

On the flip side, if qubit fidelity of near-perfect qubits is as good as proposed in this informal paper, then the minor added boost of minimalist quantum error correction might not be worth all of the extra effort.

But the flip side of the flip side is that some very interesting quantum algorithms and applications may be pushing just past the limits of near-perfect qubits and could actually benefit greatly from even a relatively minor boost in qubit fidelity.

Future for NISQ is indeed bleak

No matter how you slice or dice it, NISQ quantum computers do not have a bright future.

But, that is based in large part on the fact that NISQ is predicated on the qubits being noisy.

There is nothing in any of the current qubit technologies that limits them to always being noisy, so research and engineering advances could indeed reduce the noise of qubits in any of these qubit technologies to the point where they are no longer considered noisy at all, in which case they would no longer be considered NISQ.

NISQ is dead, NISQ is a dying dead end, and NISQ no longer has a bright future or any potential for being a practical quantum computer

I will go so far as to say that NISQ not only has a bleak future, but really is dead. Dead in the sense of not being worthy of our attention for actually trying to run practical quantum algorithms and applications.

If you want to do any interesting quantum algorithms and applications today, your best bet is to do them on simulators. That’s not a full solution, but it is the best you can do as long as we are stuck in the NISQ era.

NISQ is a dead end and its days (okay, months or maybe years) are numbered — NISQ truly is dying.

There is no bright future for NISQ.

But there may indeed be a bright future for at least some of the qubit technologies currently being used or considered for NISQ qubits. Some of those qubit technologies may indeed still have a bright future — but only if they evolve or break out to become post-NISQ qubits with at least somewhat better than the current under-three nines of qubit fidelity.

Sure, there may be some special cases or niche applications which actually can be useful on NISQ quantum computers. Maybe. Theoretically. Hypothetically. But I am not currently aware of any — other than limited or stripped-down prototypes, although they may be possible. But a few good apples don’t un-spoil the bad apples in the barrel. Even with a few good NISQ quantum applications, the truth is that most quantum algorithms and quantum applications won’t achieve practical quantum computing on NISQ hardware.

Overall, NISQ does not currently deliver on even a small fraction of the many grand promises that have been made for quantum computing — and never will.

Only post-NISQ quantum computing has the potential — even promise — to deliver on at least some reasonable fraction of the many grand promises that have been made for quantum computing.

Conclusion

- Current NISQ quantum computers are not well-suited for practical quantum computing.

- Post-NISQ is practical quantum computing. By definition.

- Full quantum error correction is impractical in the near term. And maybe even in the longer term.

- Near-perfect qubits are a critical gating factor to achieving practical quantum computing.

- Near-perfect qubits dramatically reduce and minimize the need for error mitigation.

- Three nines of qubit fidelity appears to be the practical limit for NISQ, even a barrier.

- Three nines of qubit fidelity may be the gateway to post-NISQ quantum computing.

- Full any-to-any qubit connectivity is essential.

- Larger and more complex quantum circuits and quantum algorithms must be supported.

- Longer coherence time is essential.

- Shorter gate execution time is essential.

- Higher qubit measurement fidelity is essential.

- Fine granularity of phase and probability amplitude is essential. Especially to support non-trivial quantum Fourier transforms and quantum phase estimation, such as is needed for quantum computational chemistry.

- 3.5 nines of qubit fidelity, fully connected, and able to execute 2,500 gates should be a good start for practical quantum computing.

- Replace NISQ with NPSSQ, NPISQ, and NPLSQ to be post-NISQ. All using near-perfect qubits (NP), and representing small scale (SS — less than 50 qubits), intermediate scale (IS — 50 to hundreds of qubits), and large scale (LS — over 1,000 qubits) qubit counts.

- Expect significant quantum advantage. 1,000,000X a classical solution.

- 48 fully-connected near-perfect qubits may be the sweet spot goal for a basic practical quantum computer.

- Must deliver substantial real value to the organization. The technical metrics don’t really matter.

- Post-NISQ quantum computing should enable The ENIAC Moment. The first moment when a quantum computer is able to demonstrate a production-scale quantum application achieving some significant level of quantum advantage.

- This proposal is independent of the qubit technology. Should apply equally well to superconducting transmon qubits, trapped-ion qubits, semiconductor spin qubits, or other qubit technologies, but the focus is on general-purpose quantum computers, not more specialized forms of quantum computing. At present, the latter includes quantum annealing systems, neutral-atom qubits, and photonic quantum computers, including boson sampling devices.

- Timeframe of next two to seven years.

- What to do until then? Use a simulator up to 32 to 50 qubits.

- How long might the post-NISQ era last? Until it runs out of steam.

- What might come after the post-NISQ era? Unknown.

- This proposal wouldn’t enable all envisioned quantum applications, but a reasonable fraction, a lot better than current and near-term NISQ quantum computers.

- And as qubit fidelity, coherence time, and gate execution time continue to evolve in the coming years, incrementally more quantum applications would be supported.

- Future for NISQ is indeed bleak.

- NISQ is dead, NISQ is a dying dead end, and NISQ no longer has a bright future or any potential for being a practical quantum computer.

- Let’s go post-NISQ quantum computing! Based on near-perfect qubits, not full quantum error correction.

For more of my writing: List of My Papers on Quantum Computing.